This is a note of applied machine learning workshop RStudion conference 2020

Why is it hard to predict (domain knowledge).

purrr::map allows inline code.

purrr::map and tidyr::nest covered because they are used in resample or tune.

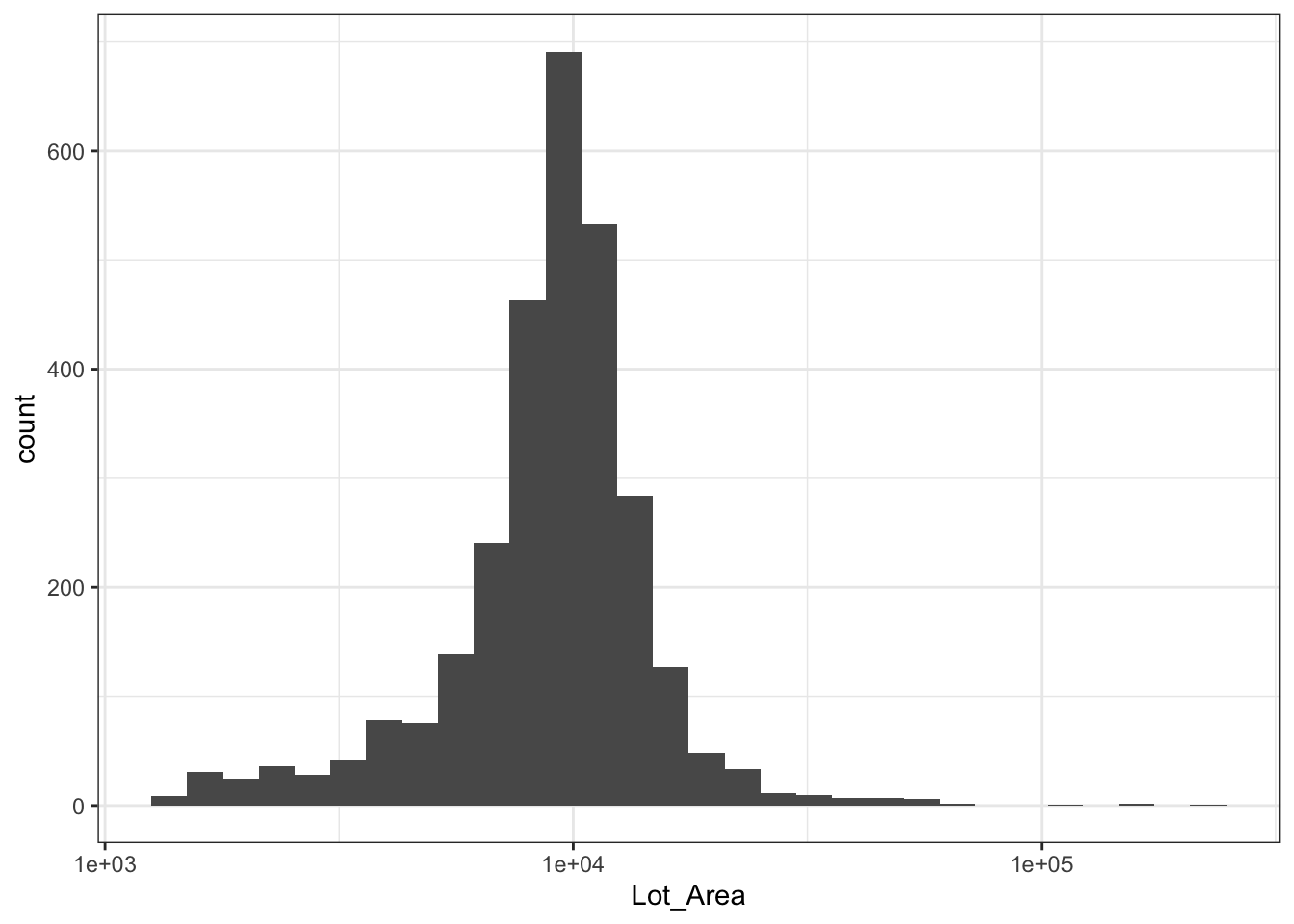

Skew data might be looking outlier.

People look at data in many different ways like outliers, missingness, correlation, and suspicion of an important variable.

The ggplot is good to explore variables adding geoms changing plot.

Machine learning is creative because you can do many different ways such as which variable should be included.

Model workflow: imputation -> transformation -> filter -> model

Resampling avoids something like the garden of forking paths or p-hacking by honest feedback.

The tuning parameter can be estimated analytically or iteratively.

Single validation set ??

Resampling process questions

Splitting data is confusing.

The test set split preserves distribution.

Interval and sampling are behind the scene of resampling.

fomular x ~ . -something to remove

Variable role: limited (can not be applied to hierarchical in Baysian??)

Formula and XY interface is not fit in machine learning. The recipes package is a solution.

The broom::glance (one-row summary of metrics, don’t trust this too much) tidy (coefficients) augment (by data points)

The parsnip (unified interface) is a solution to a different interface.

How to set specific settings using parsnip?

Many other packages supporting parsnip. Look at the tidymodels packages.

What is the difference between caret and parsnip?

Caret~base R, parsnip~tidyverse

The parsnip generalizes the interfaces and is easy to extend.

A dummy variable is confusing in the interaction of terms and how to interpret coefficiency.

The level with 0,0 allocation becomes a base variable in factor variable. A continuous variable can be categorized with recipe::step_discritize().

A zero variance predictor includes no record or single value variable.

Feature engineering problem

* Dummy variable

* Zero variance

* Standardize

Customizing Step function

The first level is a reference. There are ways to change the reference level. (Ordering factor level issues)

People respond positively to the recipes.

recipe -> prep -> juice/bake; define -> calculate -> processing (training or test set)

The post-processed data set goes to fit() to establish a model

Versioning model caching?

mod_rec <- recipe(

Sale_Price ~ Longitude + Latitude + Neighborhood,

data = ames_train

) %>%

step_log(Sale_Price, base = 10) %>%

# Lump factor levels that occur in

# <= 5% of data as "other"

step_other(Neighborhood, threshold = 0.05) %>%

# Create dummy variables for _any_ factor variables

step_dummy(all_nominal()) %>%

step_nzv(

starts_with ("Neighborhood_"))

preped_data <- prep(mod_rec)

preped_data %>%

juice() %>%

slice(1:5)## # A tibble: 5 x 11

## Longitude Latitude Sale_Price Neighborhood_Co… Neighborhood_Ol…

## <dbl> <dbl> <dbl> <dbl> <dbl>

## 1 -93.6 42.1 5.24 0 0

## 2 -93.6 42.1 5.39 0 0

## 3 -93.6 42.1 5.28 0 0

## 4 -93.6 42.1 5.29 0 0

## 5 -93.6 42.1 5.33 0 0

## # … with 6 more variables: Neighborhood_Edwards <dbl>,

## # Neighborhood_Somerset <dbl>, Neighborhood_Northridge_Heights <dbl>,

## # Neighborhood_Gilbert <dbl>, Neighborhood_Sawyer <dbl>,

## # Neighborhood_other <dbl>step_dummy -> step_other/step_nzv()

Step_dummy creates many dummy variables thus you should include the new dummy variables into the interaction terms or others or nzv. with starts_with() function.

Resolving skewness: Box-cox, inverse, of course, log or square root

parsnip model object includes original model results in $fit.

The workflow replaces prep() -> juice() -> fit() with single call fit() and bake() -> predict() with predict ().

The workflow needs adding model specification (parsnip) and preprocessing (recipes).

Resampling is the best option to estimate the performance of a model.

Resampling splits analysis set and assessment set on training set. The final result is performance metrics.

Selecting the resampling method relates to bias-variance trade-off. (Repeated CV)

https://en.wikipedia.org/wiki/Model_selection#Criteria information criteria??

Resampling does stratified sampling.

The tuning metric tends to be smooth. The irregular metric result means high variance.

http://appliedpredictivemodeling.com/blog/2014/11/27/vpuig01pqbklmi72b8lcl3ij5hj2qm

Resampling spends memory. The vfold_cv() has a copy of the original data.

Processing first or resampling first??

Resample first

If preprocessing is outside of resampling, You don’t know how the test set will be predicted.

Is feature engineering stable or unstable?

Can tune package select a good preprocessing process? Yes, it can.

The upsampling issues and rare important data points

The tuning hyperparameter (underfitting or overfitting).

Hyperparameter types grid (regular, irregular), iterative

Making function name: use underbar _ “Grid_regular”

The pros and cons of regular and non-regular grid

The non-regular grid efficient except some model can do trick.

The parsnip standardizes and it changes default parameter ranges.

The parameter tibble can not be subsetted. It will be issued in GitHub.

People take care of the default value of parameters.

Tidymodel delays evaluation. Set first and run later.

The Splines fitting is wagged at the edge of the range. When trying to predict value out of range, warnings occur. Resampling can cause an error at the edge point of the assessment set.

There is a note column in the result object.

The sensitivity of parameters can be evaluated with ggplot. The scale should be adjusted when an outlier is present.

Best fit can be choose

Documentation

Show best and select best (parameter)

Tune tunes selected parameters. If you don’t tune parameters, the parameters go default value.

The log ridership is wired because of a bimodal distribution. It will show skewed residual or something.

!!stations indicates the environment of the station object, the station object is defined at recipe object, not the global environment.

Regularization wins wrapping feature selection methods in terms of efficacy.

If two predictors are highly correlated, the signs can change and the variance of coefficients inflates.

L1 penalty does feature selection, the L2 penalty resolves correlation. Does mixture feature selection? Yes, the L1 component and lambda determine how many variables are kicked out, except pure ridge regression (ie. pure L2 penalty).

Normalize or standardize on dummy variables?

Lambda is a more important parameter than alpha in glm.

Parallelism issue. GPU is good at linear algebra. Parallelism consumes memory because they copy data. Tuning is advantaged with parallelism because it uses for a loop. Unix is better than Windows in terms of parallelism.

Inner join makes prediction tibble subsetting best.

Repeated CV residual can be estimated with average predicting values.

GCV is used to pruning the point.

Is MARS greedy? Semi-greedy.

MARS is struggling with colinearity. Use step_pca.

Bayesian process, Gaussian process, Kernel function selection

MARS hyperparameter space is high dimensional, thus the Gaussian process is better than the grid method in terms of efficiency. Grid methods for the Chicago data MARS model need more than 4000 points of the grid, although the grid search can use a parallel process.

The Bayesian parameter searching process can be updated and pause.

Classification Hard prediction vs soft prediction (probability)

Accuracy can be estimated with hard prediction and ROC can be produced on soft prediction.

Feature hashing is difficult to understand.

recipe can be tune.

C5.0 is boost_tree.

Gitter discussion https://gitter.im/conf2020-applied-ml/community/archives/2020/01/28